- The AI labs and the consulting firms have, in the last twelve months, placed roughly $12 billion of committed capital behind a single thesis: that the binding constraint on enterprise AI value is deployment, not models.

- The shorthand for the operator of this new model — "Forward Deployed Engineer" — is misleading. Read narrowly, it looks like a relabelled senior consultant. Read properly, it is the operator of an economic model that dismantles three revenue pools simultaneously: standardised product, customisation services, and managed maintenance.

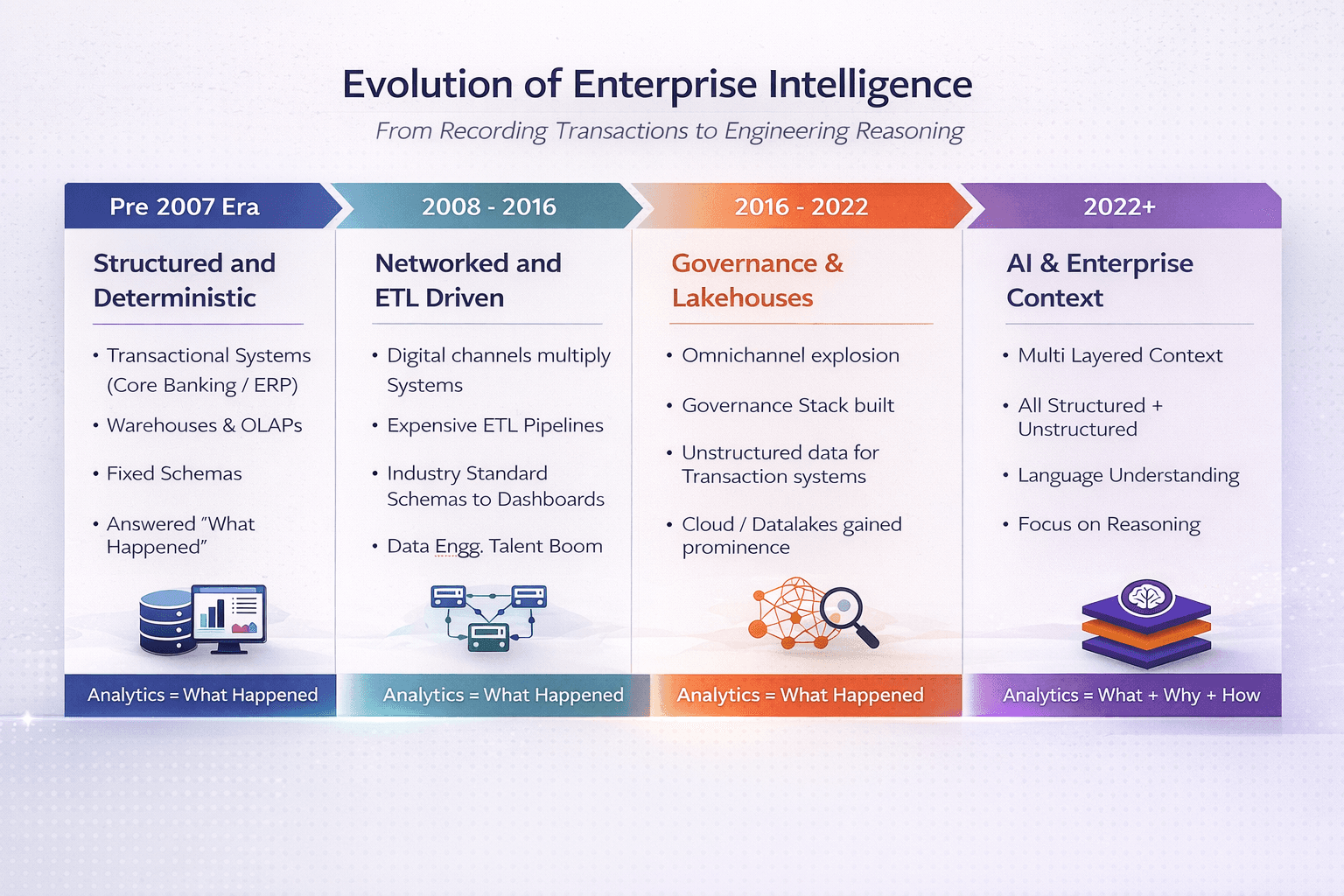

- Decision-making software (regulatory reporting, underwriting, demand forecasting, dynamic pricing, exception handling) is in AI's path. Systems of record (GL, transaction processing, KYC, audit trail) are not.

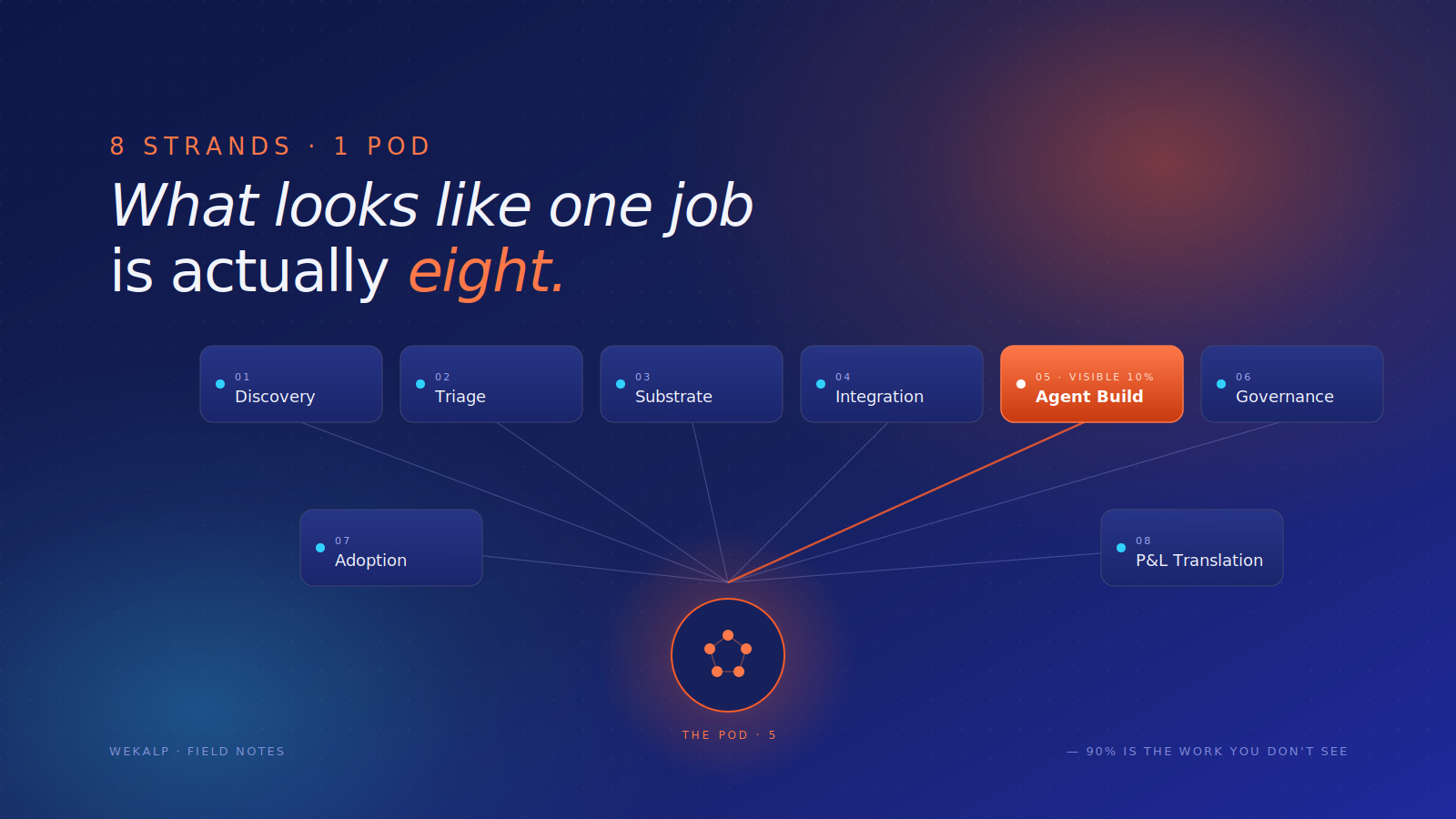

- Building the agent is one of eight strands of work in a credible engagement. The rest — discovery, triage, substrate, integration, governance, adoption, P&L translation, platform feedback — determine whether the outcome scales.

- The category will be visible in gross margin within 24–36 months. Firms that staff for agents lose. Firms that staff for outcomes, with a reusable platform underneath, compound.

A bet has just been placed

In the last month alone, the AI labs and the consulting firms have done something they almost never do. They have agreed.

- OpenAI Deployment Company — a $10 billion vehicle anchored by TPG, Advent, Bain Capital, Brookfield, McKinsey, Bain & Company and Capgemini, with roughly 150 Forward Deployed Engineers acquired through Tomoro.

- Anthropic's enterprise JV — $1.5 billion, with Blackstone, Hellman & Friedman and Goldman Sachs as founding partners.

- McKinsey × Wonderful and McKinsey × Google Cloud (the McKinsey Google Transformation Group) — tying QuantumBlack to AI-native FDE pods on both the model-lab side and the hyperscaler side.

Different sponsors, different vehicles, the same underlying bet: the binding constraint on enterprise AI value is not the model, but the deployment of the model into the messy reality of the customer.

The shorthand for the operator of this model is "Forward Deployed Engineer" (FDE). The shorthand is misleading. Read narrowly, it describes an AI engineer with client-facing polish who shows up at the customer site and ships agents. In reality, the FDE is the operator of an economic model that dismantles three of the largest revenue pools in enterprise IT: standardised product, customisation services, and managed maintenance.

The three-pool model — and how AI dismantles each

To see why the bet is being placed now, and with that much capital, it helps to understand how the current generation of software actually got sold. The model has three pieces — three revenue pools, each structured around assumptions that AI now undermines. The labs and the consulting firms are not going after one of them; they are going after all three at once.

| Pool | Today's economics | What AI changes |
|---|---|---|
| Product / Platform | Standardisation is the moat; customisation prohibitively expensive | Customised solutions buildable in weeks, not months or years |
| Implementation services | Assumptions box the scope; change requests above the line | Continuous absorption of business change |
| Managed services | L1–L4 tower with headcount leverage on standardised infrastructure | Agents handle ticket volume; humans handle exceptions |

Revenue Pool 1 · Product or platform

A mid-sized bank that wants to modernise regulatory reporting today has roughly two options.

- It can buy a packaged product — Regnology, Wolters Kluwer OneSumX, AxiomSL — which arrives with a library of pre-built returns, a standard data model, and the promise of going live in a couple of quarters.

- Or it can build on a platform — Snowflake or Databricks plus a constellation of orchestration, observability and reporting tools — which can in principle be tailored to anything the regulator asks for, but at the cost of an eighteen-month build with a systems integrator and a substantial internal team.

The same fork exists everywhere. A CPG brand thinking about production planning can buy Kinaxis or o9 as a product, or build on a platform stack. A bank thinking about credit risk can buy a packaged underwriting workflow or build one.

There is a third option — to build on low-code platforms — but these have not changed the underlying economics, so I bucket them under the broader platform category.

The unsaid reality of both plays:

- The product stack promises plug-and-play; the platform stack promises customisation

- Both survive on the same unsaid truth — every business is unique

- Both quietly push either effort or time to make things "business-ready" onto the customer or a third party

What AI changes — the standardisation moat collapses. The original economic logic of standardising reports, data models and workflows was that customisation was prohibitively expensive in human-hour terms. With AI doing much of that work at a fraction of the cost, the product can bend to the business rather than the business bending to the product. BCG reaches the same conclusion from a different direction, calling for work to be redesigned around zero-based, outcome-driven processes rather than around the constraints of standardised packages.

The incumbent objection. SAP (Joule), Oracle (OCI Generative AI), Salesforce (Agentforce), ServiceNow (Now Assist), the core banking vendors and every major SaaS company are incorporating AI to automate user tasks and penetrate existing workflows. Why buy this from a new firm when the existing vendor is putting AI into the product itself? Because the incumbent AI offering reinforces the old model rather than attacking it. AI from the incumbent makes the standard product slightly more capable, but it does not address the gap between standard and customised. McKinsey's State of AI 2025 makes the gap concrete: nearly 80% of organisations now use generative AI in at least one function; only about 5.5% report it contributing more than 5% to enterprise EBIT.

Revenue Pool 2 · Implementation

The bridging work between standard and customised is where the systems integrator's commercial model has always lived, and that model has always depended on a single document: the Statement of Work (SoW).

Every SoW contains a section called "Assumptions" or "Exclusions," built with a singular purpose — to box implementation effort around the price of services — so that:

- anything beyond that box can be taken up as a change request

- the product company keeps its margins clean

- the systems integrator makes future money on the assumptions failing

None of this is malicious; it is rational behaviour for a vendor protecting its commercial interests. To be fair, no project is ever 100% defined at the beginning of implementation, and the vendor cannot reasonably be penalised for incorrect specifications.

Sample SoW assumptions, and what each one actually does

| Assumption clause | What it really means |
|---|---|
| "Customer will provide source data in the agreed structure" | Data quality problems billable as change requests |
| "Customisations beyond standard configuration scoped separately" | The real customisation work is billable as a change request |
| "Process changes after go-live re-scoped as a CR" | Any business shift triggers a renegotiation |
| "Upstream upgrades / schema changes are out of scope" | Downstream impact billed when it arrives |
| "New reports beyond standard library treated as a CR" | The reporting library is a teaser, not a deliverable — and you pay more even for a column addition |
| "Integration with systems not listed is out of scope" | The architecture document is the billing boundary |

What AI changes — billable-hours customisations are replaced with AI-driven builds.

- The exclusions in a traditional SoW existed because the work inside them was expensive in human-hour terms. With AI in the loop and the right context fabric underneath, the same change can be propagated in days or weeks

- The conversation with the customer shifts from "how do we price the change request to respect the budget" to "in how many days can the change be shipped" — or even "can you re-code in the next couple of hours"

- The work that used to live above the line, billable as change requests, collapses into the work the partner is expected to absorb continuously

Revenue Pool 3 · Managed services

The third piece is where the integrator actually makes its sticky money. Every customisation built on top of a standardised product becomes an ongoing maintenance liability:

- Specialised knowledge of the product, and the implementation work-arounds built around it, make the customisation impossible for the customer to maintain internally

- It falls outside the standard release line and complicates the upgrade path for the product vendor

The result is a managed services contract, typically running three to seven years, structured as a tower:

- L1 — Ticketing and incident management

- L2 — Application support, break-fix on the customisations

- L3 — Change requests and minor enhancements

- L4 — Vendor escalation, version upgrades, evergreening

Implementation revenue is one-time and competitive. Managed services revenue is recurring, sticky and high margin. This is the annuity that holds the model together.

What AI changes — the headcount-leveraged model collapses into a multi-agent plus human-in-the-loop model for exceptions.

- L1 and L2 work is, structurally, interpretive work performed by humans on standardised infrastructure — ticket triage, log analysis, root cause hypothesis generation, runbook execution, configuration changes within guardrails. That is precisely what AI agents do well. Managed services do not disappear; the headcount-leveraged model behind them does

- A run-and-maintain function that used to require sixty offshore engineers can plausibly run on a pod of eight, with agents handling the volume and humans handling the exceptions

- Bain's New Growth Equation for Tech Services puts the quantitative version on the table: under a business-as-usual approach, the global technology services industry could see revenues erode by 30% or more, margins fall by another 200 basis points beyond what has already been lost, and enterprise value drop by 45% to 50% over the next five years. The numbers are large because the disruption is structural, not cyclical
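The "agents handle the volume, humans handle the exceptions" split described above can be sketched as a simple routing rule. This is a minimal illustration under stated assumptions, not a reference design: the `Ticket` fields, the allowed categories and the 0.8 confidence floor are all hypothetical, and a real engagement would agree its own guardrails with the client.

```python
from dataclasses import dataclass

# Hypothetical guardrails: categories the agent may act on, and a minimum
# self-reported confidence below which a human takes over.
AGENT_ALLOWED_CATEGORIES = {"password_reset", "log_analysis", "config_change"}
CONFIDENCE_FLOOR = 0.8


@dataclass
class Ticket:
    ticket_id: str
    category: str
    agent_confidence: float        # agent's score for its proposed fix, 0..1
    touches_production_data: bool  # writes to live data always go to a human


def route(ticket: Ticket) -> str:
    """Return "agent" for routine volume and "human" for exceptions."""
    if ticket.category not in AGENT_ALLOWED_CATEGORIES:
        return "human"
    if ticket.touches_production_data:
        return "human"
    if ticket.agent_confidence < CONFIDENCE_FLOOR:
        return "human"
    return "agent"


tickets = [
    Ticket("T-1", "password_reset", 0.97, False),
    Ticket("T-2", "config_change", 0.91, True),
    Ticket("T-3", "data_corruption", 0.99, False),
    Ticket("T-4", "log_analysis", 0.55, False),
]
routing = {t.ticket_id: route(t) for t in tickets}
print(routing)
# {'T-1': 'agent', 'T-2': 'human', 'T-3': 'human', 'T-4': 'human'}
```

The checks are deliberately ordered from hardest rule to softest: category and data-safety guardrails are deterministic, and the model's own confidence is only consulted once those pass.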

The cumulative effect — outcome-based pricing arrives

When all three pools break together, the consequence is the one that should be keeping enterprise software CFOs awake. Per-seat SaaS, perpetual licenses with maintenance, time-and-materials services and fixed-bid implementations were all built on a world where the cost of delivering software outcomes was high, variable and human-leveraged.

When that cost becomes measurable and AI-leveraged, the buyer stops paying for seats, licenses or engineer-hours. The buyer starts paying for outcomes.

Bain estimates that in three years, many routine digital tasks will shift from "human plus app" to "AI agent plus API," and that SaaS providers will have to pivot from seat-based to outcome-based pricing to survive. The pricing model breaks.

Why most enterprises will not build this in-house

A reasonable CXO will ask, at this point, whether all of this can simply be built in-house. If AI makes customisation cheap, why bring in an external partner at all?

Some will try. A handful will succeed — typically large banks, large telcos and large retailers with strong existing data engineering capability and the patience to absorb early failures. Most will run into four problems.

- Business focus. However much technology adoption happens inside a non-software enterprise, the business is not technology adoption. The business is either giving out loans or selling consumer goods, and that has to remain the focus.

- Skill scarcity and attrition. Enterprises will not accept the risk that the skills needed to keep mission-critical technology running become unavailable. Hiring well, and engineering robust knowledge transfer, is the bread and butter of software services firms, not of banks or retailers.

- The systems of record do not go away. The core banking platform, the SAP instance, the OMS, the DMS, the POS estate — these still have to be integrated to, written into, reconciled against. An in-house AI team without deep working relationships with the teams that own those systems gets blocked.

- Dependency on a moving external environment. Model providers change pricing and capability quarterly. Evaluation methodologies shift. Regulators issue new circulars on AI use. Context engineering — the discipline of deciding what the model sees, in what order, with what provenance and at what freshness — is six months old as a named field. The opportunity cost of building the team internally is a two-year delay during which competitors with external partners pull ahead.

The relevant question is not build versus buy. It is what kind of external partner. A traditional SI selling a headcount-leveraged services contract is not the answer anymore. An AI-native firm that has industrialised a different operating model — and accumulates platform leverage across engagements — is closer to it.

With this backdrop, let us redefine the FDE

The narrow definition — "AI engineer who shows up at the customer site and builds agents" — is the natural definition. But building agents is the last 10% of the work. The 90% that precedes it is what determines whether the engagement produces an outcome the customer will pay for or a demo that stalls in pilot.

The eight strands of work for the FDE

A credible engagement runs along eight parallel strands. The first four are sequenced. The last four run continuously through the engagement's life.

The shape of these strands explains why the AI labs partnered with the consulting firms — and not with traditional systems integrators or pure-play AI shops.

- Six of the eight strands turn on soft skills the consulting industry has spent decades building: stakeholder mapping, workflow archaeology, change management, executive communication, board-level economic translation, and the judgement to say no.

- The seventh — agent design — is technical, but it is increasingly knowledge-and-prompting work rather than ground-up development.

- The eighth — legacy integration — is genuinely engineering: API contracts with core banking, treasury, AML and OMS systems, message queues, idempotency, data residency. This is where consulting firms partner with engineering depth rather than provide it themselves.

The new model needs all three wings in the same pod. That combination does not naturally live inside a product company, and it does not naturally live inside a body shop. It lives inside a consulting-led delivery firm, which is why the capital is going there.

- Discovery — Map the workflow as it actually exists, not as documented — because the documentation is invariably wrong. Stakeholder interviews, decision-point identification, ownership mapping, and a target operating model that names the outcomes, the deterministic systems, the handoff points and the metrics.

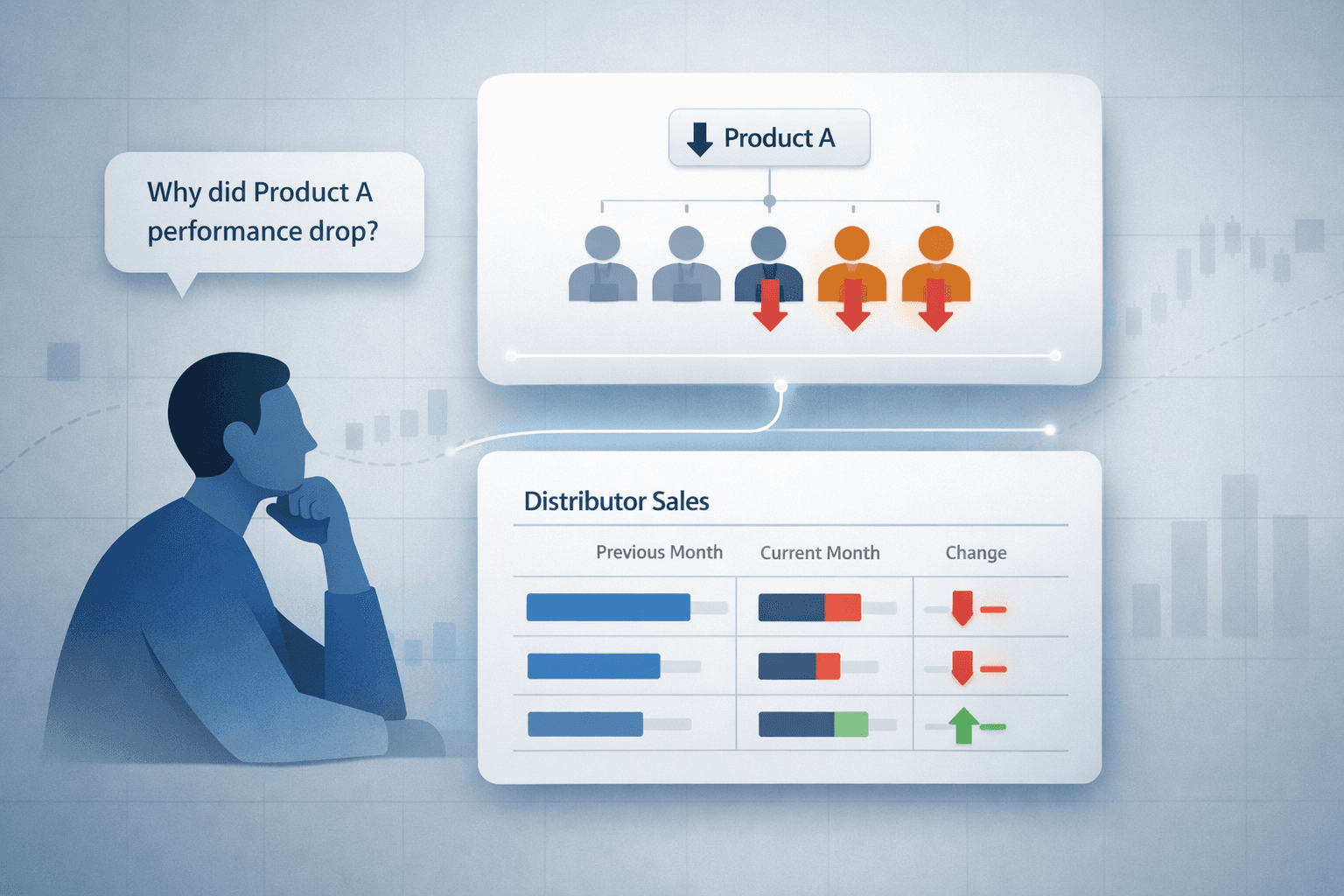

- Triage — Not every step in the workflow should be an agent. The deterministic core (validations, threshold checks, regulator-driven calculations) stays in rules and code. The interpretive layer (synthesis, narrative drafting, exception handling) goes to AI — operating through deterministic tools and hard guardrails. The triage call is the highest-leverage decision in the engagement. Most product-led AI teams cannot make this call because they are paid to ship agents, not outcomes.

- Substrate — Before any agent can run, the data has to be resolvable: entities reconciled across systems, a semantic layer that aligns the master data, freshness SLAs defined per source, context retrievable in a form the agent can trust. This is data engineering, master data management, and context engineering combined — typically the largest single slice of the engagement. It is also the work most AI vendors quietly assume someone else will do, which is why pilots demo well and production rollouts stall.

- Integration — An agent that cannot read from and write to the legacy estate is a parlour trick. The work is integration patterns, message queues, idempotency, retry semantics — plus the political work of getting the team that owns a system of record to agree to a new API contract. This work rewards engineers who have done it before and know which battles are worth fighting.

- Agent build — Only at this point does the agent itself get written. This is the strand the narrow FDE definition covers. It is the visible 10% of the work, and on its own it is the easiest of the eight.

- Governance and evaluation — Agents degrade silently if left alone. Continuous evaluation — golden sets per intent, drift detection, human-in-the-loop sampling, escalation protocols, sign-off ladders — is the mechanism by which the agent stays trustworthy as policies, catalogues and language change underneath it. BCG reports AI-related incidents rising 21% year-on-year. Only 10% of companies today let AI agents make decisions autonomously; 35% expect to within three years. The governance gap is about to widen rapidly.

- Adoption and change management — An agent that works technically but is not trusted by the people whose work it touches is a failed engagement. The pod designs the sign-off ladders, the exception protocols, the user enablement programs, the trust dashboards that let humans see what the agent is doing and why. In regulated industries this consumes more time than building the agent itself.

- P&L translation — The senior FDE has to walk into the CFO's office and translate technical work into P&L impact, denominated in the customer's actual operating metrics — cost-to-serve, cycle time, provisioning levels, fraud loss ratios, gross margin per channel, NPS, regulatory finding counts. The CXO is not buying an architecture; they are buying an outcome they can defend in a board meeting. Outcome-based pricing depends entirely on whether the delivery partner can make this translation credibly. Without it, "outcome-based pricing" is a slide.

Platform feedback closes the loop: every gravel road built in a customer environment is meant to be paved into a reusable highway the next FDE walks down. A firm that does not operate this loop is a body shop. A firm that does becomes a category.
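The integration strand above names idempotency and retry semantics without showing why they matter together. A minimal sketch, assuming a system of record that deduplicates on an idempotency key: `LedgerStub`, `TransientError` and `post_with_retry` are hypothetical names invented for illustration, and the stub simulates the awkward case where the write lands but the response is lost.

```python
import time
import uuid


class TransientError(Exception):
    """Stands in for a timeout or dropped connection."""


class LedgerStub:
    """Hypothetical system of record that deduplicates on an idempotency key."""

    def __init__(self, fail_first_n: int = 0):
        self.postings = {}  # idempotency_key -> amount
        self._failures_left = fail_first_n

    def post(self, idempotency_key: str, amount: float) -> str:
        # Apply the write at most once per key.
        first_time = idempotency_key not in self.postings
        if first_time:
            self.postings[idempotency_key] = amount
        # Simulate the response being lost after the write has landed.
        if self._failures_left > 0:
            self._failures_left -= 1
            raise TransientError("response lost")
        return "created" if first_time else "duplicate_ignored"


def post_with_retry(ledger: LedgerStub, amount: float,
                    max_attempts: int = 4, base_delay: float = 0.01) -> str:
    key = str(uuid.uuid4())  # one key per logical write, reused on every retry
    for attempt in range(max_attempts):
        try:
            return ledger.post(key, amount)
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)  # exponential backoff


ledger = LedgerStub(fail_first_n=2)
status = post_with_retry(ledger, 250.0)
print(status, len(ledger.postings))
# duplicate_ignored 1
```

Because the key is generated once per logical write and reused on each retry, the agent can retry freely after a timeout without double-posting, which is the property an agent writing into a ledger or order system needs.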

A related and underappreciated part of the job is the judgement to walk away. Senior FDEs in mature firms are the people who tell prospective clients "this engagement will not work because your data is not ready" or "your sponsor is not strong enough to push this through." Saying no to bad engagements is what distinguishes a strategist from an implementer.

From factory to pod

Every one of the eight strands above existed in the old model. They were just distributed across a fifteen-to-twenty-person implementation factory followed by an L1/L2/L3/L4 managed services tower, with sequential handoffs and contract boundaries between each stage. AI does not change the work that needs to be delivered. AI changes the operating model that delivers it.

A small pod of five — a senior architect, a data and integration engineer, an applied AI engineer, an embedded SME from the client side, and an engagement lead who owns the commercial and stakeholder relationship — can perform what a fifteen-person implementation team used to do, in a fraction of the time, and absorb change continuously instead of quarterly. The same pod, sized down, runs the engagement in steady state rather than handing off to a managed services tower.

What turns this pod from a competent team into a firm worth backing is what compounds underneath it: reusable connectors and context primitives, accumulated domain patterns, evaluation harnesses and golden datasets, and institutional muscle in legacy integration work that better models cannot commoditise. A firm with that platform delivers customisation at standardisation speed, with gross margins that improve engagement over engagement. A firm without it is a high-end body shop whose margins compress as the agent layer commoditises. The difference will be visible in gross margin within 24 to 36 months — closer to 24 for retail, D2C and B2B SaaS, closer to 36 for BFSI and regulated sectors where adoption is slowed by regulators.
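One of the compounding layers named above, the evaluation harness with golden datasets, can be sketched in a few lines. This is an illustrative outline only: the golden set, the `agent_stub` function, the baseline scores and the 0.25 drift tolerance are all hypothetical, and a production harness would call the real agent and use far larger sets per intent.

```python
from collections import defaultdict

# Hypothetical golden set: (intent, input text, expected output).
GOLDEN_SET = [
    ("classify_exception", "payment reversed twice", "duplicate_reversal"),
    ("classify_exception", "missing counterparty LEI", "missing_reference_data"),
    ("route_query", "where is my refund", "refunds_team"),
    ("route_query", "update my address", "account_services"),
]

# Hypothetical accuracy recorded at sign-off, and the drop that triggers review.
BASELINE = {"classify_exception": 1.0, "route_query": 1.0}
DRIFT_TOLERANCE = 0.25


def agent_stub(intent: str, text: str) -> str:
    """Stand-in for the deployed agent; one answer is deliberately wrong."""
    answers = {
        "payment reversed twice": "duplicate_reversal",
        "missing counterparty LEI": "missing_reference_data",
        "where is my refund": "refunds_team",
        "update my address": "refunds_team",  # simulated regression
    }
    return answers[text]


def accuracy_by_intent(agent, golden_set):
    """Score the agent against the golden set, grouped by intent."""
    hits, totals = defaultdict(int), defaultdict(int)
    for intent, text, expected in golden_set:
        totals[intent] += 1
        hits[intent] += int(agent(intent, text) == expected)
    return {intent: hits[intent] / totals[intent] for intent in totals}


def drifted_intents(scores, baseline, tolerance):
    """Intents whose accuracy fell more than `tolerance` below baseline."""
    return sorted(i for i, s in scores.items() if baseline[i] - s > tolerance)


scores = accuracy_by_intent(agent_stub, GOLDEN_SET)
print(scores)
# {'classify_exception': 1.0, 'route_query': 0.5}
print(drifted_intents(scores, BASELINE, DRIFT_TOLERANCE))
# ['route_query']
```

Scoring per intent rather than in aggregate is the design choice that matters here: a regression in one intent would otherwise be averaged away by healthy results elsewhere. The golden set itself is the reusable asset that carries over from one engagement to the next.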

What this means for buyers and investors

For CXOs evaluating an AI partner, the diagnostic is not "show me the agent you will build." It is whether the partner can credibly answer three questions:

- How will you decide which parts of this workflow should not be agents?

- What discovery, data, integration, governance and adoption work are you budgeting for before the agent is written — and how will that work absorb the next business change without a change request?

- Are you willing to put outcome-denominated metrics in the contract rather than effort-denominated ones?

A vendor that flinches at the third question is still operating on the old model, regardless of how much AI is in the demo.

For investors evaluating an AI services or applied-AI firm, the parallel diagnostic is whether the firm is accumulating the four compounding layers above — or selling FDE-hours under a new name. The first compounds. The second commoditises.

In closing

The enterprise software industry was built on a singular premise: standardise the product, hide the real work in assumptions, bill the incremental needs of the business as a change request in perpetuity, and wrap the customisations in a managed services annuity. That premise is now structurally exposed across all three of its revenue streams.

- When customisation is cheap, standardisation stops being a moat.

- When change is fast to ship, the change request stops being a billing line.

- When agents handle interpretive work on standardised infrastructure, the managed services tower collapses to a pod.

- When the cost of delivering an outcome is measurable, outcome-based pricing stops being a slide and becomes a contract.

The Forward Deployed Engineer, properly defined, is the operator of this new model. The industry that iterated work through change requests is about to be replaced by an industry that iterates on outcomes.

The enterprises that win the next three years are not the ones buying the most agents. They are the ones whose partners understood, on day one, what the agent was actually for — and staffed the engagement accordingly.

References

- State of AI 2025 — Superagency in the workplace (the 80% adoption / 5.5% EBIT impact gap): mckinsey.com/capabilities/tech-and-ai/our-insights/superagency-in-the-workplace

- How Agents Are Accelerating the Next Wave of AI Value Creation (the zero-based, outcome-driven work redesign argument): bcg.com/publications/2025/agents-accelerate-next-wave-of-ai-value-creation

- What Happens When AI Stops Asking Permission? (the 21% YoY rise in AI-related incidents): bcg.com/publications/2025/what-happens-ai-stops-asking-permission

- The Emerging Agentic Enterprise (BCG × MIT SMR; the 10%-today, 35%-in-three-years autonomous-decision stat): sloanreview.mit.edu/projects/the-emerging-agentic-enterprise

- The New Growth Equation for Tech Services (the 30% revenue erosion / 45–50% enterprise value loss projection): bain.com/about/media-center/press-releases/20252/business-as-usual-could-erase-30-of-revenue-for-tech-services-firms

- Will Agentic AI Disrupt SaaS? (the "human plus app → AI agent plus API" shift and the move to outcome-based pricing): bain.com/insights/will-agentic-ai-disrupt-saas-technology-report-2025